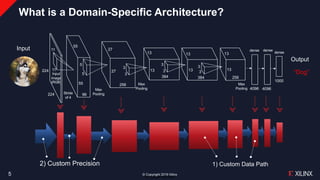

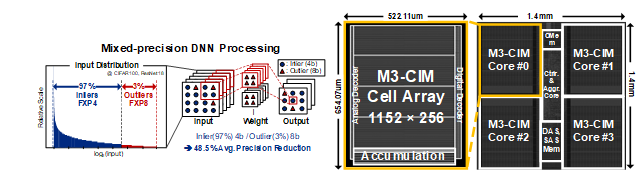

![A 0.3–2.6 TOPS/W precision-scalable processor for real-time large-scale ConvNets | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/f2dd73ae127c5ee3713a92e1057eddea92fbf207/2-Figure1-1.png)

[PDF] A 0.3–2.6 TOPS/W precision-scalable processor for real-time large-scale ConvNets | Semantic Scholar

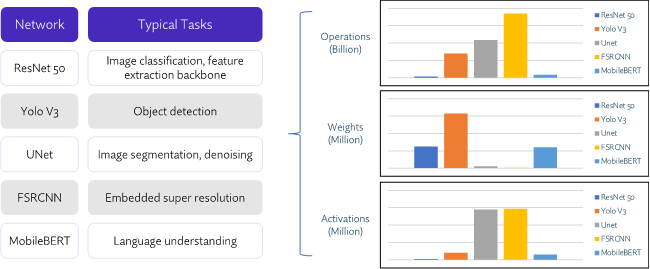

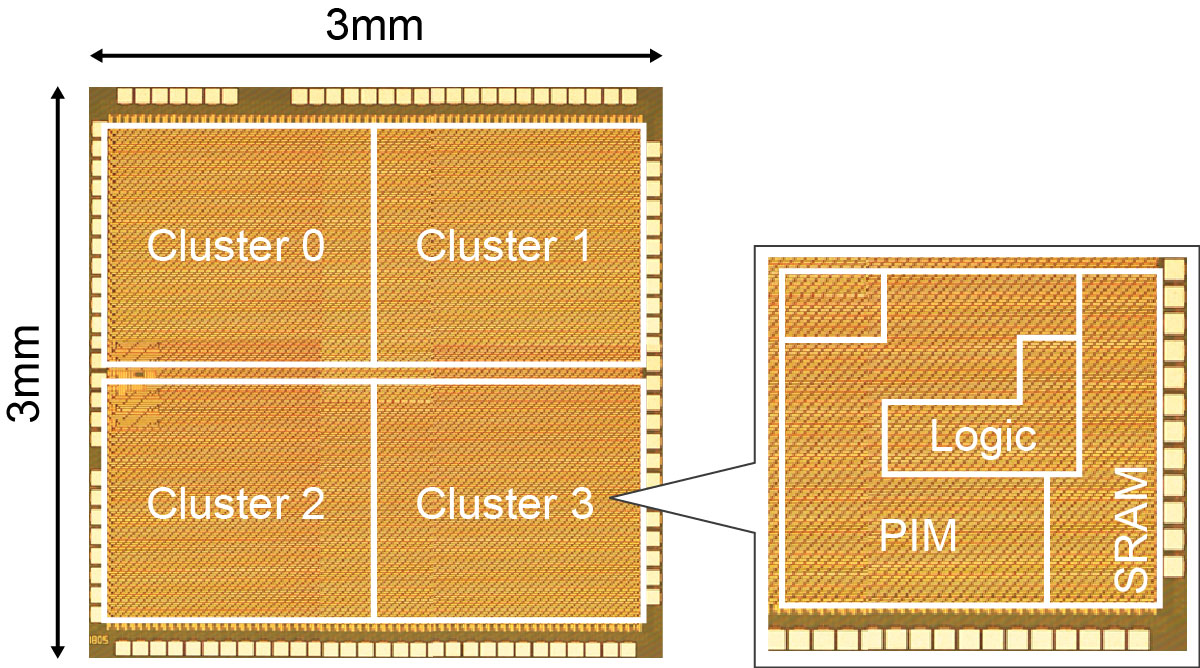

A 161.6 TOPS/W Mixed-mode Computing-in-Memory Processor for Energy-Efficient Mixed-Precision Deep Neural Networks (Prof. Hoi-Jun Yoo's Lab) - KAIST School of Electrical Engineering

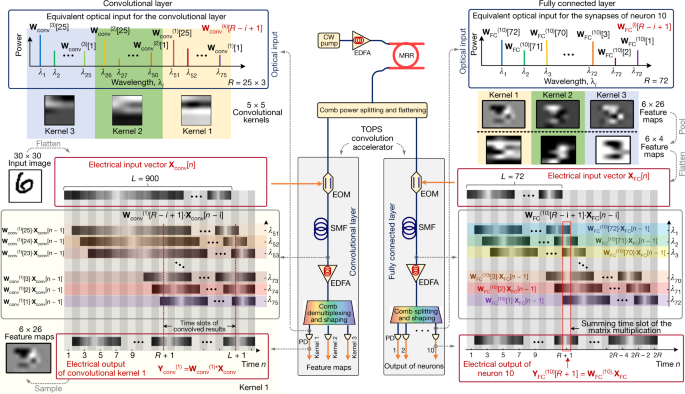

![A 3.43TOPS/W 48.9pJ/pixel 50.1nJ/classification 512 analog neuron sparse coding neural network with on-chip learning and classification in 40nm CMOS | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/a2e283532b71e9b6af7addb3b3f4f4a1af6e0fb4/2-Figure1-1.png)

[PDF] A 3.43TOPS/W 48.9pJ/pixel 50.1nJ/classification 512 analog neuron sparse coding neural network with on-chip learning and classification in 40nm CMOS | Semantic Scholar

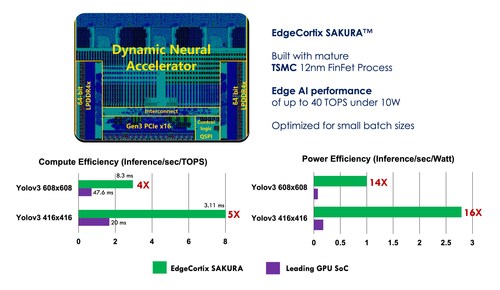

EdgeCortix Announces Sakura AI Co-Processor Delivering Industry Leading Low-Latency and Energy-Efficiency | EdgeCortix